I ran Google's Deepdream on the Mona Lisa with every layer, using 3 caffe models (datasets):

- The BVLC ImageNet dataset,

- The MIT Places dataset,

- The CompCars dataset.

These are the only caffe datasets I’ve been able to find which have inception layers in their deploy.txt. If you know of any others please get in touch (@sprawld)

I ran this on Lucid Dream, a python script I wrote (based on Bat Country). I used default settings (4 octaves, scale: 1.4) for 20 iterations:

python lucid.py --image mona-lisa.jpg

--output img/mona

--base-model google,place,cars

--iterations 20

--layer all

There's a table at the bottom with all the results. It can also show dreams with 2, 5 and 10 iterations (it may take a while to load).

The deepdream neural network was originally designed to perform a useful function - to recognise objects in an image. It was only later some crazy genius at Google decided to loop the network's impression of the scene back in on itself.

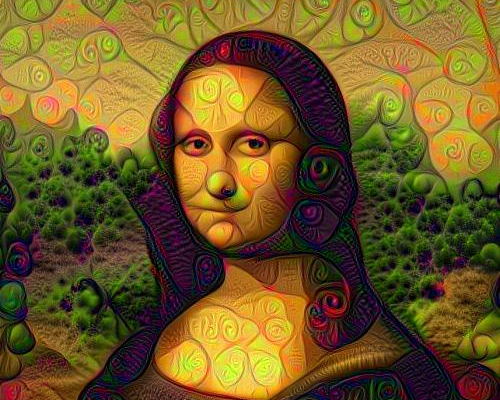

There are 2 types of layer. The first like the primary visual cortex* - it segments colour and shapes in the scene. As such the effects are mostly swirling image effects. They don't vary much between the different datasets.

Layers in the primary cortex are all those beginning conv, pool or inception_3. With the exception of: pool3/3x3_s2, pool4/3x3_s2 and inception_3b/output.

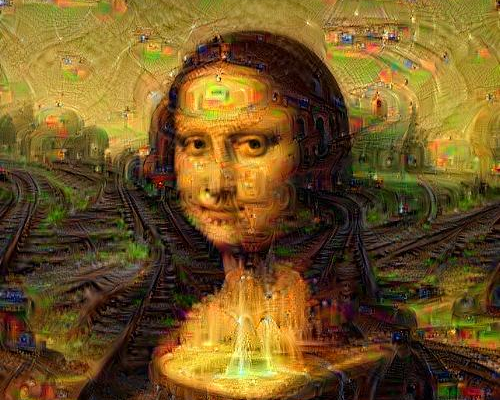

In the secondary layers, the neural network is recognising objects in the scene, and attempting to draw them. Layers in the secondary cortex are the exceptions I mentioned and all layers beginning inception_4 or inception_5.

What deepdream draws in the secondary cortex is much more varied. Each picture can trigger different parts of the image recognition network, and dreams can will go off in a wildly different directions.

However, this is all based on what the network can recognise. So each dataset gives the dream its own style.

* simile, not neuroscience

The original dataset draws on images from ImageNet. ImageNet contains a lot of pictures of animals - particularly dogs - and facial features. Very often it will fill the image with eyes and dog-slugs. The result can be a mess, the dream going off in several directions at once. That being said, the dataset recognises the widest variety of pictures. With the use of guide images, the dream can be more directed.

The MIT Places dataset creates dreams like watercolour paintings. The dataset is far more limited - it only contains pictures of places and landscapes - but this restriction makes for a far more coherent dream. Pictures are transformed into castles and pagodas stretching off into the distance.

The car dataset is the cubist artist to MIT Places' landscapes. This may be a too restrictive dataset, turning pictures into a limited set of machinery and sci-fi pod things. Still each dataset comes with it's unique style, and I'm hungry for more.

Here's the full set of images. You can switch on or off different datasets, show a range of iterations (2-20), and change the image size. Click on a picture to see it full sized.

Also have a look at Mona Lisa with guided images.